Chapter 4

Problems with the Big Bang


In chapter 2 we saw that three evidences for the big bang are usually given: the CBR, the expansion of the universe, and the abundances of the light elements. In that chapter it was argued that the first is a clean prediction of the big bang, while the last two are more aptly described as explanatory in nature. In this chapter we shall explore some of the difficulties that modern cosmology and the big bang have.

Halton Arp

Since the late 1960s one of the more vocal critics of standard cosmology has been Halton “Chip” Arp. In two popular-level books,¹ Arp has laid out many of his objections. Much of his work concerns quasars. The first quasars were point radio sources identified in 1961. They appeared to be faint blue stars with a few unidentified emission lines. In 1963 Martin Schmidt showed that the spectral lines in one of these “radio stars” were hydrogen emission lines normally found in the UV part of the spectrum. To be seen in the visible part of the spectrum, the spectral lines would have to be shifted by 17%. This is a huge redshift, which meant that if the redshift were cosmological, the object had to be more than a billion light years away. The observed brightness meant that the radio star had to be far brighter than a typical bright galaxy.
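The step from a 17% redshift to a distance of more than a billion light years can be sketched with the Hubble relation. The following is only a rough illustration: the value of the Hubble constant (70 km/s/Mpc) is an assumption not given in the text, and the simple relation v = cz is a low-redshift approximation.

```python
# Rough Hubble-relation distance for a redshift of 0.17.
# ASSUMPTION: H0 = 70 km/s/Mpc is an illustrative value, not taken from the text.
C_KM_S = 299_792.458       # speed of light in km/s
H0_KM_S_MPC = 70.0         # assumed Hubble constant in km/s per megaparsec
LY_PER_MPC = 3.2616e6      # light years in one megaparsec


def hubble_distance_ly(z, h0=H0_KM_S_MPC):
    """Approximate distance in light years using v = c*z and d = v / H0."""
    velocity_km_s = C_KM_S * z
    distance_mpc = velocity_km_s / h0
    return distance_mpc * LY_PER_MPC


distance_ly = hubble_distance_ly(0.17)
print(f"{distance_ly:.2e} light years")
```

With the assumed constant, the result comes out at a little over two billion light years, consistent with the "more than a billion" stated above. A different choice of the Hubble constant scales the answer in inverse proportion.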

Quasar

At the same time, archival measurements of the brightness variations of the radio star over many years showed that the light irregularly varied over a time of only a few months. This was interpreted to mean that the object was at most only a few light months (the distance that light travels in a month) in size. This is required because any variation in brightness must be caused by some mechanism. There must be some “switch” that tells the material in the quasar to get brighter and then to get fainter. A signal must transmit this information. For a small object, such a signal can pass throughout the object virtually instantaneously. However, for a large object there will be some delay in transmitting this signal. The length of time for signal propagation, and hence the period of variability, is limited by the speed of the signal and the size of the object. The fastest known speed of propagation is the speed of light. If an object takes a month to vary in brightness, then it can be no more than a light month in size. This is an upper limit—the actual size is probably less.
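The size limit described here is simply the speed of light multiplied by the time of variation. A minimal sketch of the arithmetic follows, assuming a 30-day month (the text does not fix the length of a month) and using the astronomical unit only as a familiar yardstick.

```python
# Upper limit on the size of a varying source: size <= c * (variation time).
# ASSUMPTION: a "month" is taken to be 30 days for illustration.
C_KM_S = 299_792.458        # speed of light in km/s
SECONDS_PER_DAY = 86_400
AU_KM = 1.495978707e8       # one astronomical unit in km


def max_size_km(variation_days):
    """Largest size (km) that a signal moving at light speed can cross in the given time."""
    return C_KM_S * variation_days * SECONDS_PER_DAY


light_month_km = max_size_km(30)
light_month_au = light_month_km / AU_KM
print(f"{light_month_km:.3e} km, or about {light_month_au:.0f} AU")
```

A light month works out to several thousand times the earth-sun distance, which is tiny compared to the size of a galaxy.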

Simply put, this radio star must be extremely bright and small. How can something be so small and yet so powerful? The new name, quasi-stellar object (QSO), was coined and that name was eventually contracted to “quasar.”

Over the ensuing years many more quasars were discovered (there are now over 20,000 known), and naturally much more data has been collected. For instance, the first quasars were radio noisy; that is, they gave off much energy in the radio part of the spectrum. However, many quasars that give off little or no radio emission are now known. They are called radio quiet. Quasars have been found with various redshifts, but all quasar redshifts are very high. Assuming that the Hubble relation is valid, their high redshifts suggest that quasars are at huge distances. Many quasars appear to have fuzzy glows around them, which astronomers think are the light of the galaxies that host the QSOs.

The picture that has emerged is that quasars are the cores of galaxies. Indeed, the cores of many galaxies without attendant quasars are found to exhibit quasar-like properties. A theory has been developed to explain how quasars can be so small and yet so powerful. We think that a quasar is a massive black hole containing millions of solar masses of material that is accreting matter from an orbiting disk. As the material descends into the steep gravitational potential well of the black hole, a huge amount of energy is released. Similar theories have been developed to explain somewhat less exotic goings on in galactic nuclei. In recent years observations made with the Hubble Space Telescope have revealed strong evidence for massive black holes in nearby galaxies.

In summation, astronomers generally think that quasars are extremely distant, bright, small objects. The only theory we know that can explain the properties of quasars is that they are powered by supermassive black holes. Arp has called this entire picture of quasars into question. He has suggested that quasar redshifts are not cosmological, and hence quasars are not that far away, and they are not that intrinsically bright. If this is true, then there is no great mystery about what is powering quasars. Arp is doing neither more nor less than doubting the principle that redshifts are cosmological. How has he done this? He has offered several lines of evidence, which we will now discuss.

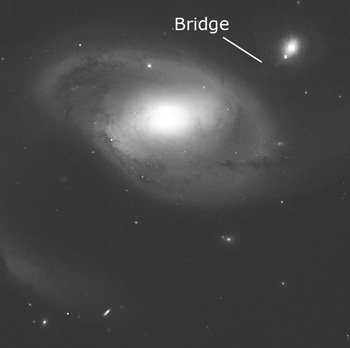

Arp has taken photographs of several galaxies that appear to be interacting with other galaxies or with quasars. One of the best examples is NGC 4319, which appears to have a luminous bridge between itself and a nearby galaxy. Arp argues that the luminous bridge is material that is streaming from one galaxy to the other. To do so, the two galaxies must be at about the same distance from us. However, when the redshifts of the two galaxies are measured, they are very different, suggesting (via the Hubble relation) that the two galaxies lie at vastly different distances. If this is true, then the two galaxies cannot be interacting as suggested by the photographs. How have Arp’s critics responded to this? They counter that the luminous bridge is an artifact or an illusion. The question really comes down to whether you believe what the redshifts tell us or if you believe what the images seem to tell us.

Image courtesy of NASA

One of the best examples of galactic interaction is NGC 4319, which appears to have a luminous bridge between itself and Markarian 205.

Arp has found other galaxies and/or quasars that show what appear to be arms of material from one object to the other. In some cases these arms are bent at peculiar angles that suggest a gravitational interaction between the objects. In every case the objects have radically different redshifts, which would mean that the objects lie at very different distances if the redshifts are cosmological. Arp’s critics respond that while these crooked arms of material are real, the objects in question are chance alignments. That is, the two objects appear to be interacting because they lie in exactly the same direction, and one of the objects has a peculiar arm that appears to terminate on the other object. Arp counters by asking what the probability of such chance alignments is. These probabilities will be briefly discussed presently.

Another line of evidence that Arp has pursued is the alignment of quasars around nearby galaxies. He has found examples of nearby galaxies that have quasars clumped about them. If quasars are at fantastic distances, then they should be randomly distributed on the sky with some average density. In the cases where quasars are clumped around galaxies, the quasar density in the vicinity of the galaxies exceeds the average quasar density by orders of magnitude. Arp concludes that such density enhancements that just happen to line up with foreground galaxies are extremely unlikely. He thinks that it is more reasonable to conclude that the quasars in question are physically related to the galaxy around which they clump, and hence are not at huge distances.

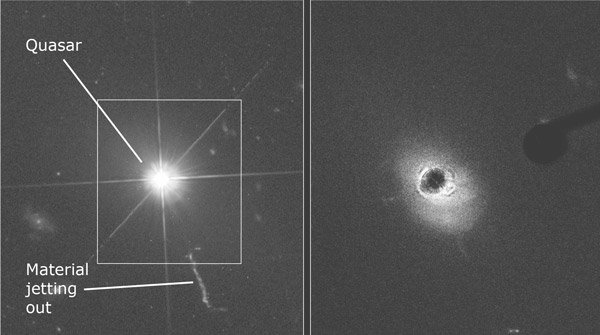

Image courtesy of NASA

The Hubble image on the left, taken with the Wide Field Planetary Camera 2, shows the brilliant quasar but little else. The diffraction spikes demonstrate the quasar is truly a point-source of light (like a star) because the black hole’s “central engine” is so compact. Once the blinding “headlight beam” of the quasar is blocked by the ACS (right), the host galaxy pops into view.

It is one thing to critique the standard theory; it is another matter to replace that understanding with your own. In Arp’s estimation, how are the quasars physically related to the host galaxies? He thinks that the quasars have been ejected from the galaxies. To support this contention, Arp has found examples of quasars that are not only clumped around a galaxy, but are along a line. In some cases this line coincides with a jet of material that is obviously shooting from the galaxy. Arp believes that quasars are ejected at high speeds from galaxies, but for some reason we only see the ones that are moving away from us. Perhaps any that are moving toward us (presumably half of them) are somehow obscured.

Arp’s critics have responded that no matter how unlikely these alignments may seem, they happened and hence have a probability of 1. They accuse Arp of improperly formulating the question. They say that he should have asked the probability before he found the data, rather than finding the data first and then asking the probability. This may seem like a picky point, but there is some validity to this criticism. Recall in chapter 2 we saw that the author of this book was quite improbable, but he happened. No one is amazed that he exists as he does, because he exists. For such a probability question to have meaning, the question should have been formulated before his conception.

Another example may illustrate this better. What is the probability that a fair coin when tossed will produce heads ten times in a row? It would be ½ to the tenth power. What is the probability that the tenth toss will be heads, given that the previous nine were all heads? Anyone who has studied probability theory will quickly realize that the probability is ½. The probability of a single toss is independent of any previous tosses. How and when one formulates the question is critical in calculating probabilities. No matter how improbable Arp’s alignments may seem, Arp’s critics insist that they happened, and so their probability is 1.
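The two coin-toss probabilities can be checked with exact fractions. This is a minimal sketch; the variable names are ours, chosen for illustration.

```python
from fractions import Fraction

# Probability of heads on one toss of a fair coin.
p_heads = Fraction(1, 2)

# Question asked before any tosses: ten heads in a row.
p_ten_in_a_row = p_heads ** 10

# Question asked after nine heads have already come up:
# P(ten heads | first nine heads) = P(ten heads) / P(nine heads).
p_nine_in_a_row = p_heads ** 9
p_tenth_given_nine = p_ten_in_a_row / p_nine_in_a_row

print(p_ten_in_a_row)       # 1/1024
print(p_tenth_given_nine)   # 1/2
```

The same ten tosses give an answer of 1 in 1,024 or 1 in 2, depending entirely on when the question is posed.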


This line of reasoning confuses historical and scientific probabilities. Historical probabilities are either 1 or 0—either something happened or it did not. In chapter 2, I used my existence as an example. My existence is not a scientific question; it is a historical question. I exist, so the probability of my existence is 1. We can scientifically approach the question of the probability that I came about randomly, and that result is extremely remote. Science computes the probabilities of events regardless of when the calculation is done. Newspapers, historical records, or other eyewitness accounts tell us whether the historical probability is 1 or 0.

We use Arp’s approach all of the time to rule out many explanations for phenomena on such grounds. In some criminal cases DNA evidence is used. DNA testing cannot uniquely identify a person as a fingerprint can. Instead, it merely tells us how well the DNA matches the suspect and the probability that it will match another randomly selected person. Suppose that in a particular case the DNA matches the suspect and that we are told that the match would be as good in only one instance in one million. In the estimation of most people, that would be pretty convincing evidence of guilt. However, if the city in which the crime occurred has three million people, the defense could argue that there likely are two other people who could have committed the crime. Of course the prosecution would resort to a probability argument, asking how likely it is that the suspect’s and the true perpetrator’s DNA match so well. Assuming the innocence of their client, the defense attorneys could claim that as unlikely as the scientific probability is, the historical probability is 1, because it happened.
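The defense's arithmetic in this example can be sketched as follows. The figures are those given above; the assumptions that every resident is equally likely to match and that matches are independent are ours, made only to keep the illustration simple.

```python
from fractions import Fraction

# Figures from the example: a one-in-a-million match, a city of three million.
match_probability = Fraction(1, 1_000_000)
city_population = 3_000_000

# Expected number of residents whose DNA would match this well.
# ASSUMPTION: every resident has the same independent chance of matching.
expected_matches = city_population * match_probability

# Chance that at least one resident other than the suspect also matches.
other_residents = city_population - 1
p_no_other_match = (1 - float(match_probability)) ** other_residents
p_at_least_one_other = 1 - p_no_other_match

print(expected_matches)                 # 3
print(round(p_at_least_one_other, 2))
```

About three residents would be expected to match, which is the basis for the defense's claim that two people besides the suspect could have committed the crime. Under these assumptions, the chance that at least one other resident matches is high.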

As another example, consider a bucket of sand dumped upon a table. Each time that we dump the sand the individual sand grains end up in different locations. We could dump the sand onto the table a billion times, and the sand would never fall the same way twice. In other words, every dump would be equally improbable. Since the sand from each dump must end up in some arrangement, we are not amazed when the sand falls out a certain way. While the particular result of any single dump is highly improbable, each one happens in an historical sense, so the historical probability that it happened is 1. However, suppose that you entered a room where I told you that I had just dumped the sand onto a table. Upon inspection you notice that some of the sand grains make the outline of a few letters. As you read the letters you discover that they spell out the preamble to the United States Constitution. Of course you would not believe for a second that this was the result of a random dump of sand, and you would accuse me of arranging the sand this way. However, I could counter that, as unlikely as it seems, it did happen, so the probability is 1.

In the face of my defiant insistence that it happened, how could you pursue the probability argument? You would calculate the scientific probability that the sand arranged itself into those words by chance. You would find that the probability is so low as to be effectively 0. You would then know that in this historical instance, the probability is 1 that the sand was arranged by hand, not randomly dumped. The critics who object to Arp’s probability argument are confusing scientific and historical probabilities.

Arp pursued his work with some of the largest telescopes in the world until 1986 when a group of influential astronomers who opposed him conspired to deny him any more telescope time. They made it clear that henceforth he could pursue more conventional research, but that his lifework was finished. Miffed at this outrageous action, Arp took an early retirement from California Institute of Technology and accepted a position in Germany. In the estimation of a minority of astronomers, Arp’s work was never successfully refuted but was merely shouted down.

Arp has called into question the assumption that redshifts are cosmological, that is, that distance is related to redshift via the Hubble relation. If Arp is correct, then it is not so clear that the universe is expanding. If the universe is not expanding, then the big bang is not a viable theory, since that model was developed to explain the expansion. Arp does reject the big bang, though he apparently does not reject the expansion of the universe per se. Instead, Arp thinks that while redshift often reflects distance, it does not always do so. He believes that there are some large Doppler motions superimposed on the Hubble flow.

Arp’s cosmology is a variant of the steady state. In the steady-state model, quasars cannot be distant. If quasars are all far away, then their great distances imply a look-back time. This means that we are looking at quasars not as they appear today, but as they appeared long ago. The fact that we do not see quasars nearby must mean that they no longer exist in the universe today. Therefore, the universe would look different at different times, which would violate the perfect cosmological principle, the basic assumption of the steady-state theory. This will be discussed in the next chapter.

We should restate an important point of Arp’s work. If redshifts are not cosmological in many cases, then one must doubt if redshifts are cosmological in any case. If redshifts are not cosmological, then the universe is not expanding, and the big-bang theory is not possible.

Quantized Redshifts

Starting in the 1970s an astronomer named William Tifft discovered that galaxy redshifts are not uniformly and continuously distributed, but instead are quantized. In physics something is quantized if measurements of that thing’s properties assume certain discrete values but not values in between. One of the foundations of quantum mechanics, the physics of small systems such as atoms, is that energy is quantized. That is, energy comes in small units and energy does not exist between those units. Tifft found that redshifts tend to occur in multiples of 72 km/sec. Later studies have found other multiples.

There is a common misconception on this point. Many erroneously think that the quantization is found in the redshifts as observed. This is not the case. The observed redshifts must be corrected for local motions. We have known for some time that the sun is orbiting in the Milky Way Galaxy at about 250 km/sec and that the Milky Way and local group of galaxies are moving as well. When these corrections are applied and a histogram of galaxy redshifts is plotted, the grouping of the redshifts at multiples of 72 km/sec is obvious. One difference between quantized redshifts and the quantization that occurs in quantum mechanical systems is that the quantization of quantum mechanical systems is absolute (there are no exceptions), while galaxy redshifts do have exceptions. That is, while quantum mechanical particles, such as electrons, are never observed to fall between two adjacent quanta, galaxy redshifts do frequently fall between the intervals of 72 km/sec.
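One simple way to look for grouping at multiples of 72 km/sec is to treat each corrected velocity as a phase within a 72 km/sec cycle and to measure how tightly the phases bunch together. The sketch below is not Tifft's method or data. The velocities are synthetic values invented purely for illustration, and the statistic (the mean resultant length of the phases) is our choice.

```python
import math
import random

PERIOD_KM_S = 72.0  # interval quoted in the text


def phase_concentration(velocities, period=PERIOD_KM_S):
    """Mean resultant length of the phases 2*pi*v/period.

    Near 1 when velocities bunch at multiples of the period,
    near 0 when they are spread evenly between multiples.
    """
    phases = [2 * math.pi * v / period for v in velocities]
    mean_cos = sum(math.cos(p) for p in phases) / len(phases)
    mean_sin = sum(math.sin(p) for p in phases) / len(phases)
    return math.hypot(mean_cos, mean_sin)


# SYNTHETIC data, invented for illustration only (not real measurements).
rng = random.Random(0)
# Velocities near multiples of 72 km/sec, with a 5 km/sec scatter.
grouped = [PERIOD_KM_S * rng.randint(1, 50) + rng.gauss(0, 5) for _ in range(500)]
# Velocities spread evenly over the same range, with no preferred interval.
spread = [rng.uniform(72, 3600) for _ in range(500)]

grouped_score = phase_concentration(grouped)
spread_score = phase_concentration(spread)
print(f"grouped: {grouped_score:.2f}, spread: {spread_score:.2f}")
```

The grouped sample scores close to 1 and the evenly spread sample close to 0. Real data with exceptions, as described above, would be expected to fall somewhere in between.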

What does the quantization of redshift mean to cosmology? It is not clear what it means. While most cosmologists doubt that quantization is real, no one has been able to discredit it. Unlike Arp’s work, this does not rely upon scientific probability arguments. Why are cosmologists so opposed to quantized redshifts? Primarily because they can find no reason for it, and the big-bang model cannot accommodate it. This whole topic is rather new and is due for more exploration. It could develop into a major problem for the big-bang theory.

On the other hand, a creation-based cosmological model that has been proposed has no problem with quantized redshifts. This model will be described in the next chapter. Just as quantized energy levels were fundamental to the establishment of quantum mechanics, perhaps quantized redshifts will be key in finding a new cosmology.

The CBR

Earlier we saw that the CBR was a good prediction of the big-bang model. At the same time, properties of the CBR may be a problem for the big bang. The early universe must have been very smooth. Otherwise, any slight density enhancements would have acted as gravitational seeds to collect matter so that most of the matter in the universe would have long ago been sucked into black holes. On the other hand, if the universe had been exactly smooth, there would not have been any gravitational seeds to produce the structure that we see. The universe appears to be delicately balanced between these two extremes. Incidentally, this is another argument for the anthropic principle that has been developed.

Image courtesy of NASA

CBR picture of the Milky Way Galaxy

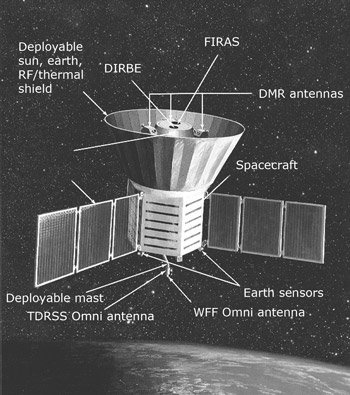

The slight density enhancements in the early universe that allegedly allowed their gravity to collect matter into galaxies and other structures that we see today are called inhomogeneities. From the big-bang theory, cosmologists managed to calculate how much inhomogeneity the early universe ought to have had to produce the universe that we see today. This inhomogeneity should have left its imprint upon the CBR. During the 1980s a space probe named COBE was built to measure the calculated inhomogeneity. As the first data from COBE were assembled in the early 1990s, we found that the CBR was perfectly smooth. Only after two years of data were examined by a very powerful statistical method did the COBE researchers claim to have found the sought-after inhomogeneity. This was hailed as confirmation of the big-bang theory, but was it?

Image courtesy of NASA

COBE satellite

The COBE experiment was specifically designed to search for the expected inhomogeneity, but the experiment failed to find it as intended. That was because the inhomogeneity eventually claimed was an order of magnitude below what was predicted. How can the prediction be confirmed when it was off by an order of magnitude? In the wake of the discovery, big-bang models have been refined to account for the lower-than-expected inhomogeneity. What has been lost in most reporting of this is that the data did not perfectly match the predictions, as is often claimed. This sort of reasoning has all-too-often happened with the big-bang model. A concordance of theory and measurement is proclaimed only after the data has been used to modify the model to “predict” the measurements.

A further question remains whether inhomogeneity has even been found. Only after very powerful statistical methods were applied to the data did anyone claim that the expected inhomogeneities had been found. No one could point to a particular direction in space and say that this was an area of higher- or lower-than-average temperature. Yet, most scientists were convinced that variations in temperature had indeed been found. Imagine if an astronomer showed you hundreds of stars in a dark sky and then proceeded to tell you that he has nearly 100% confidence that three of the stars are not stars but are actually planets. The only problem is, he cannot point to any individual star and tell you with complete assurance that it is actually a planet. Most people would consider such a proposition strange at best.

Miscellaneous Difficulties with the Big Bang

The big-bang model has become so widely accepted that few have noticed the many nagging difficulties or have realized the numerous ways in which the model has been modified to handle some of these difficulties. Some of these have been discussed previously, but they should be mentioned here as well. The big bang depends upon the cosmological principle, but is the cosmological principle true? On the local level, galaxies obviously clump into clusters, but most cosmologists have assumed that on a grand scale this clumping disappears. Extensive surveys of galaxy distributions have revealed that clumping and long strands of galaxies seem to be the norm on the largest scales that have been plumbed. The homogeneity of the universe is assumed, but these surveys indicate that the universe is not homogeneous on the scales examined. Put another way, they supply no evidence that the universe is indeed homogeneous. As for isotropy, the previously mentioned polarization study of distant radio sources indicates that there is some fundamental anisotropy in the universe. Therefore, there is considerable doubt that the cosmological principle upon which the big-bang model is based is true.

The COBE experiment was designed to measure the variations in the CBR that had been predicted by the standard big-bang model. COBE failed to detect the predicted variations, but studies of the data claimed to have found variations in the data at a level of an order of magnitude below those predicted by the model. Somehow this was hailed as a triumph of the big-bang theory. Few people seem to be aware that the big-bang theory was reengineered to fit the data. While discovery of variations in the CBR may be claimed as a qualitative victory, it certainly was a quantitative failure.

The horizon and flatness problems were described in a previous chapter. Inflation was created to explain these and other problems. Inflation is not universally accepted, it suffers from some difficulties of its own, and it amounts to speculation since little, if any, of it can be tested at this time. Most people who support the big bang would insist that inflation and recalculation of the big bang to fit the COBE data are merely refinements in the model. However, others legitimately view these as attempts to patch a flawed theory.

Checking Your Understanding

- What do most astronomers think that quasars are?

- What is the significance of Halton Arp’s work?

- What are quantized redshifts?

- How well did the big bang predictions match the COBE observations?

- Do we observe the universe to be homogeneous, as assumed by the big-bang theory?

Universe by Design

This book explores the universe, explaining its origins and discussing the historical development of cosmology from a creationist viewpoint.

Footnotes

- H. Arp, Quasars, Redshifts, and Controversies (Berkeley, CA: Interstellar Media, 1987), and Seeing Red: Redshifts, Cosmology, and Academic Science (Montreal, Canada: C. Roy Keys, Inc., 2002).
