Chapter 24

John R. Baumgardner, Geophysics

Dr. John R. Baumgardner is a technical staff member in the theoretical division of Los Alamos National Laboratory. He holds a B.S. in electrical engineering from Texas Tech University, an M.S. in electrical engineering from Princeton University, and an M.S. and Ph.D. in geophysics and space physics from UCLA. Dr. Baumgardner is the chief developer of the TERRA code, a 3-D finite element program for modeling the earth’s mantle and lithosphere. His current research is in the areas of planetary mantle dynamics and the development of efficient hydrodynamic methods for supercomputers.

I live in the town of Los Alamos, located in the mountains of northern New Mexico. It is the home of the Los Alamos National Laboratory which, with approximately 10,000 employees, is one of the larger scientific research facilities in the United States. In recent years I have debated the origins issue with a number of fellow scientists. Some of these debates have been in the form of letters to the editor in our local newspaper.[1] What follows are some of the important issues as I see them.

Can random molecular interactions create life?

Many evolutionists are persuaded that the 15 billion years they assume for the age of the cosmos is an abundance of time for random interactions of atoms and molecules to generate life. A simple arithmetic lesson reveals this to be no more than an irrational fantasy.

This arithmetic lesson is similar to calculating the odds of winning the lottery. The number of possible lottery combinations corresponds to the total number of protein structures (of an appropriate size range) that are possible to assemble from standard building blocks. The winning tickets correspond to the tiny sets of such proteins with the correct special properties from which a living organism, say a simple bacterium, can be successfully built. The maximum number of lottery tickets a person can buy corresponds to the maximum number of protein molecules that could have ever existed in the history of the cosmos.

Let us first establish a reasonable upper limit on the number of molecules that could ever have been formed anywhere in the universe during its entire history. Taking 10^80 as a generous estimate for the total number of atoms in the cosmos,[2] 10^12 as a generous upper bound for the average number of interatomic interactions per second per atom, and 10^18 seconds (roughly 30 billion years) as an upper bound for the age of the universe, we get 10^110 as a very generous upper limit on the total number of interatomic interactions which could have ever occurred during the long cosmic history the evolutionist imagines. Now if we make the extremely generous assumption that each interatomic interaction always produces a unique molecule, then we conclude that no more than 10^110 unique molecules could have ever existed in the universe during its entire history.
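The bound in the paragraph above is simply the product of three assumed figures. A minimal Python sketch of that arithmetic follows; it uses only the numbers stated in the text, while the variable names and the Julian-year conversion factor are illustrative assumptions rather than part of the original argument.

```python
import math

# Figures as stated in the text (the author's assumed upper bounds).
ATOMS_IN_COSMOS = 10**80                 # total number of atoms
INTERACTIONS_PER_ATOM_PER_SEC = 10**12   # interatomic interactions per atom per second
AGE_SECONDS = 10**18                     # upper bound on the age of the universe

# Assumption for the unit conversion only: a Julian year of 365.25 days.
SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Product of the three bounds, kept as an exact Python integer.
total_interactions = ATOMS_IN_COSMOS * INTERACTIONS_PER_ATOM_PER_SEC * AGE_SECONDS

# Check of the "roughly 30 billion years" conversion.
age_years = AGE_SECONDS / SECONDS_PER_YEAR

print(f"interaction bound: 10^{round(math.log10(total_interactions))}")
print(f"10^18 seconds is about {age_years:.2e} years")
```

Keeping the product as an integer avoids any floating-point rounding in the exponent; the logarithm is taken only for display.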

Now let us contemplate what is involved in demanding that a purely random process find a minimal set of about 1,000 protein molecules needed for the most primitive form of life. To simplify the problem dramatically, suppose somehow we already have found 999 of the 1,000 different proteins required and we need only to search for that final magic sequence of amino acids which gives us that last special protein. Let us restrict our consideration to the specific set of 20 amino acids found in living systems and ignore the hundred or so that are not. Let us also ignore the fact that only those with left-handed symmetry appear in life proteins. Let us also ignore the incredibly unfavorable chemical reaction kinetics involved in forming long peptide chains in any sort of plausible nonliving chemical environment.

Let us merely focus on the task of obtaining a suitable sequence of amino acids that yields a 3-D protein structure with some minimal degree of essential functionality. Various theoretical and experimental evidence indicates that in some average sense about half of the amino acid sites must be specified exactly.[3] For a relatively short protein consisting of a chain of 200 amino acids, the number of random trials needed for a reasonable likelihood of hitting a useful sequence is then in the order of 20^100 (100 amino acid sites with 20 possible candidates at each site), or about 10^130 trials. This is a hundred billion billion times the upper bound we computed for the total number of molecules ever to exist in the history of the cosmos! No random process could ever hope to find even one such protein structure, much less the full set of roughly 1,000 needed in the simplest forms of life. It is therefore sheer irrationality for a person to believe random chemical interactions could ever identify a viable set of functional proteins out of the truly staggering number of candidate possibilities.
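The comparison in this paragraph can be reproduced in a few lines. The sketch below takes the text's own inputs (100 specified sites, 20 candidates per site, and the earlier bound of 10^110 molecules) and works in base-10 logarithms; the variable names are hypothetical, and the code illustrates only the stated arithmetic, not the underlying assumptions.

```python
import math

# Inputs taken from the text.
SPECIFIED_SITES = 100        # half of a 200-residue chain
AMINO_ACIDS = 20             # candidates per specified site
MOLECULE_BOUND_LOG10 = 110   # log10 of the upper bound on molecules ever formed

# Exact number of candidate sequences over the specified sites.
trials = AMINO_ACIDS ** SPECIFIED_SITES

# The same quantity as a power of ten.
trials_log10 = SPECIFIED_SITES * math.log10(AMINO_ACIDS)

# How many powers of ten separate the trial count from the molecule bound.
ratio_log10 = trials_log10 - MOLECULE_BOUND_LOG10

print(f"20^100 is about 10^{trials_log10:.1f}")
print(f"ratio to the molecule bound is about 10^{ratio_log10:.1f}")
```

A gap of about twenty powers of ten corresponds to the "hundred billion billion" quoted in the text (10^2 x 10^9 x 10^9).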

In the face of such stunningly unfavorable odds, how could any scientist with any sense of honesty appeal to chance interactions as the explanation for the complexity we observe in living systems? To do so, with conscious awareness of these numbers, in my opinion represents a serious breach of scientific integrity. This line of argument applies, of course, not only to the issue of biogenesis but also to the issue of how a new gene/protein might arise in any sort of macroevolution process.

One retired Los Alamos National Laboratory fellow, a chemist, wanted to quibble that this argument was flawed because I did not account for details of chemical reaction kinetics. My intention was deliberately to choose a reaction rate so gigantic (one million million reactions per atom per second on average) that all such considerations would become utterly irrelevant. How could a reasonable person trained in chemistry or physics imagine there could be a way to assemble polypeptides in the order of hundreds of amino acid units in length, to allow them to fold into their three-dimensional structures, and then to express their unique properties, all within a small fraction of one picosecond!? Prior metaphysical commitments forced the chemist in question to such irrationality.

Another scientist, a physicist at Sandia National Laboratories, asserted that I had misapplied the rules of probability in my analysis. If my example were correct, he suggested, it “would turn the scientific world upside-down.” I responded that the science community has been confronted with this basic argument in the past but has simply engaged in mass denial. Fred Hoyle, the eminent British cosmologist, published similar calculations two decades ago.[4] Most scientists just put their hands over their ears and refused to listen.

In reality this analysis is so simple and direct it does not require any special intelligence, ingenuity, or advanced science education to understand or even originate. In my case, all I did was to estimate a generous upper bound on the maximum number of chemical reactions, of any kind, that could have ever occurred in the entire history of the cosmos and then compare this number with the number of trials needed to find a single life protein with a minimal level of functionality from among the possible candidates. I showed the latter number was many orders of magnitude larger than the former. I assumed only that the candidates were all equally likely. My argument was just that plain. I did not misapply the laws of probability. I applied them as physicists normally do in their everyday work.

Just how do coded language structures arise?

One of the most dramatic discoveries in biology in the 20th century is that living organisms are realizations of coded language structures. All the detailed chemical and structural complexity associated with the metabolism, repair, specialized function, and reproduction of each living cell is a realization of the coded algorithms stored in its DNA. A paramount issue, therefore, is how such extremely large language structures arise.

The origin of such structures is, of course, the central issue of the origin-of-life question. The simplest bacteria have genomes consisting of roughly a million codons. (Each codon, or genetic word, consists of three letters from the four-letter genetic alphabet.) Do coded algorithms which are a million words in length arise spontaneously by any known naturalistic process? Is there anything in the laws of physics that suggests how such structures might arise in a spontaneous fashion? The honest answer is simple. What we presently understand from thermodynamics and information theory argues persuasively that they do not and cannot!
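The codon description above implies two simple counts: how many distinct three-letter words a four-letter alphabet allows, and how many letters a million-codon genome contains. A small Python sketch follows; the letters "ACGT" are an assumption for illustration, since the text says only "four-letter genetic alphabet", and the variable names are hypothetical.

```python
from itertools import product

GENETIC_ALPHABET = "ACGT"    # assumed letters; the text specifies only that there are four
CODON_LENGTH = 3             # letters per codon, per the text
GENOME_CODONS = 1_000_000    # "roughly a million codons", per the text

# Enumerate every possible three-letter word over the alphabet.
codons = ["".join(letters) for letters in product(GENETIC_ALPHABET, repeat=CODON_LENGTH)]

distinct_codons = len(codons)                  # 4 ** 3
genome_letters = GENOME_CODONS * CODON_LENGTH  # total letters in such a genome

print(distinct_codons)
print(genome_letters)
```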

Language involves a symbolic code, a vocabulary, and a set of grammatical rules to relay or record thought. Many of us spend most of our waking hours generating, processing, or disseminating linguistic data. Seldom do we reflect on the fact that language structures are clear manifestations of nonmaterial reality.

This conclusion may be reached by observing that the linguistic information itself is independent of its material carrier. The meaning or message does not depend on whether it is represented as sound waves in the air or as ink patterns on paper or as alignment of magnetic domains on a floppy disk or as voltage patterns in a transistor network. The message that a person has won the $100,000,000 lottery is the same whether that person receives the information by someone speaking at his door or by telephone or by mail or on television or over the internet.

Indeed, Einstein pointed to the nature and origin of symbolic information as one of the profound questions about the world as we know it.[5] He could identify no means by which matter could bestow meaning to symbols. The clear implication is that symbolic information, or language, represents a category of reality distinct from matter and energy. Linguists today, therefore, speak of this gap between matter and meaning-bearing symbol sets as the “Einstein gulf.”[6] Today in this information age there is no debate that linguistic information is objectively real. With only a moment’s reflection we can conclude that its reality is qualitatively different from the matter/energy substrate on which the linguistic information rides.

From whence, then, does linguistic information originate? In our human experience we immediately connect the language we create and process with our minds. But what is the ultimate nature of the human mind? If something as real as linguistic information has existence independent of matter and energy, from causal considerations it is not unreasonable to suspect that an entity capable of originating linguistic information is also ultimately nonmaterial in its essential nature.

An immediate conclusion of these observations concerning linguistic information is that materialism, which has long been the dominant philosophical perspective in scientific circles, with its foundational presupposition that there is no nonmaterial reality, is simply and plainly false. It is amazing that its falsification is so trivial.

The implications are immediate for the issue of evolution. The evolutionary assumption that the exceedingly complex linguistic structures which comprise the construction blueprints and operating manuals for all the complicated chemical nanomachinery and sophisticated feedback control mechanisms in even the simplest living organism—that these structures must have a materialistic explanation—is fundamentally wrong. But how, then, does one account for symbolic language as the crucial ingredient from which all living organisms develop and function and manifest such amazing capabilities? The answer should be obvious: an intelligent Creator is unmistakably required. But what about macroevolution? Could physical processes in the realm of matter and energy at least modify an existing genetic language structure to yield another with some truly novel capability, as the evolutionists so desperately want to believe?

On this question Professor Murray Eden, a specialist in information theory and formal languages at the Massachusetts Institute of Technology, pointed out several years ago that random perturbations of formal language structures simply do not accomplish such magical feats. He said, “No currently existing formal language can tolerate random changes in the symbol sequence which expresses its sentences. Meaning is almost invariably destroyed. Any changes must be syntactically lawful ones. I would conjecture that what one might call ‘genetic grammaticality’ has a deterministic explanation and does not owe its stability to selection pressure acting on random variation.”[7]

In a word, then, the answer is no. Random changes in the letters of the genetic alphabet have no more ability to produce useful new protein structures than could the generation of random strings of amino acids discussed in the earlier section. This is the glaring and fatal deficiency in any materialist mechanism for macroevolution. Life depends on complex nonmaterial language structures for its detailed specification. Material processes are utterly impotent to create such structures or to modify them to specify some novel function. If the task of creating the roughly 1,000 genes needed to specify the cellular machinery in a bacterium is unthinkable within a materialist framework, consider how much more unthinkable for the materialist is the task of obtaining the roughly 100,000 genes required to specify a mammal!

Despite all the millions of pages of evolutionist publications from journal articles to textbooks to popular magazine stories which assume and imply that material processes are entirely adequate to accomplish macroevolutionary miracles, there is in reality no rational basis for such belief. It is utter fantasy. Coded language structures are nonmaterial in nature and absolutely require a nonmaterial explanation.

But what about the geological/fossil record?

Just as there has been glaring scientific fraud in things biological for the past century, there has been a similar fraud in things geological. The error, in a word, is uniformitarianism. This outlook assumes and asserts that the earth’s past can be correctly understood purely in terms of present-day processes acting at more or less present-day rates. Just as materialist biologists have erroneously assumed that material processes can give rise to life in all its diversity, materialist geologists have assumed that the present can fully account for the earth’s past. In so doing, they have been forced to ignore and suppress abundant contrary evidence that the planet has suffered major catastrophe on a global scale.

Only in the past two decades has the silence concerning global catastrophism in the geological record begun to be broken. Only in the last 10–15 years has the reality of global mass extinction events in the record become widely known outside the paleontology community. Only in about the last 10 years have there been efforts to account for such global extinction in terms of high energy phenomena such as asteroid impacts. But the huge horizontal extent of Paleozoic and Mesozoic sedimentary formations and their internal evidence of high energy transport represent stunning testimony for global catastrophic processes far beyond anything yet considered in the geological literature. Field evidence indicates catastrophic processes were responsible for most, if not all, of this portion of the geological record. The proposition that present-day geological processes are representative of those which produced the Paleozoic and Mesozoic formations is utter folly.

What is the alternative to this uniformitarian perspective? It is that a catastrophe, driven by processes in the earth’s interior, progressively but quickly resurfaced the planet. An event of this type has recently been documented as having occurred on the earth’s sister planet Venus.[8] This startling conclusion is based on high-resolution mapping performed by the Magellan spacecraft in the early 1990s which revealed the vast majority of craters on Venus today to be in pristine condition and only 2.5 percent embayed by lava, while an episode of intense volcanism prior to the formation of the present craters has erased all earlier ones from the face of the planet. Since this resurfacing, volcanic and tectonic activity has been minimal.

There is pervasive evidence for a similar catastrophe on our planet, driven by runaway subduction of the precatastrophe ocean floor into the earth’s interior.[9] That such a process is theoretically possible has been at least acknowledged in the geophysics literature for almost 30 years.[10] A major consequence of this sort of event is progressive flooding of the continents and rapid mass extinction of all but a few percent of the species of life. The destruction of ecological habitats began with marine environments and progressively enveloped the terrestrial environments as well.

Evidence for such intense global catastrophism is apparent throughout the Paleozoic, Mesozoic, and much of the Cenozoic portions of the geological record. Most biologists are aware of the abrupt appearance of most of the animal phyla in the lower Cambrian rocks. But most are unaware that the Precambrian-Cambrian boundary also represents a nearly global stratigraphic unconformity marked by intense catastrophism. In the Grand Canyon, as one example, the Tapeats Sandstone immediately above this boundary contains hydraulically transported boulders tens of feet in diameter.[11]

That the catastrophe was global in extent is clear from the extreme horizontal extent and continuity of the continental sedimentary deposits. That there was a single large catastrophe and not many smaller ones with long gaps in between is implied by the lack of erosional channels, soil horizons, and dissolution structures at the interfaces between successive strata. The excellent exposures of the Paleozoic record in the Grand Canyon provide superb examples of this vertical continuity with little or no physical evidence of time gaps between strata. Especially significant in this regard are the contacts between the Kaibab and Toroweap Formations, the Coconino and Hermit Formations, the Hermit and Esplanade Formations, and the Supai and Redwall Formations.[12]

The ubiquitous presence of crossbeds in sandstones, and even limestones, in Paleozoic, Mesozoic, and even Cenozoic rocks is strong testimony for high energy water transport of these sediments. Studies of sandstones exposed in the Grand Canyon reveal crossbeds produced by high velocity water currents that generated sand waves tens of meters in height.[13] The crossbedded Coconino sandstone exposed in the Grand Canyon continues across Arizona and New Mexico into Texas, Oklahoma, Colorado, and Kansas. It covers more than 200,000 square miles and has an estimated volume of 10,000 cubic miles. The crossbeds dip to the south and indicate that the sand came from the north. When one looks for a possible source for this sand to the north, none is readily apparent. A very distant source seems to be required.
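The area and volume quoted for the Coconino sandstone imply an average thickness, which the text does not state. The sketch below derives it from the two quoted figures; because the area is given as "more than" 200,000 square miles, the result should be read as a rough upper estimate of the average, and the variable names are illustrative assumptions.

```python
# Figures quoted in the text.
AREA_SQ_MILES = 200_000        # "more than 200,000 square miles"
VOLUME_CUBIC_MILES = 10_000    # "estimated volume of 10,000 cubic miles"

FEET_PER_MILE = 5280           # statute mile

# Average thickness = volume / area (not a figure from the source; derived here).
avg_thickness_miles = VOLUME_CUBIC_MILES / AREA_SQ_MILES
avg_thickness_feet = avg_thickness_miles * FEET_PER_MILE

print(f"average thickness: {avg_thickness_miles:.2f} miles "
      f"(about {avg_thickness_feet:.0f} feet)")
```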

The scale of the water catastrophe implied by such formations boggles the mind. Yet numerical calculation demonstrates that when significant areas of the continental surface are flooded, strong water currents with velocities of tens of meters per second spontaneously arise.[14] Such currents are analogous to planetary waves in the atmosphere and are driven by the earth’s rotation.

This sort of dramatic global-scale catastrophism documented in the Paleozoic, Mesozoic, and much of the Cenozoic sediments implies a distinctively different interpretation of the associated fossil record. Instead of representing an evolutionary sequence, the record reveals a successive destruction of ecological habitat in a global tectonic and hydrologic catastrophe. This understanding readily explains why Darwinian intermediate types are systematically absent from the geological record—the fossil record documents a brief and intense global destruction of life and not a long evolutionary history! The types of plants and animals preserved as fossils were the forms of life that existed on the earth prior to the catastrophe. The long span of time and the intermediate forms of life that the evolutionist imagines in his mind are simply illusions. And the strong observational evidence for this catastrophe absolutely demands a radically revised timescale relative to that assumed by evolutionists.

But how is geological time to be reckoned?

With the discovery of radioactivity about a century ago, uniformitarian scientists have assumed they have a reliable and quantitative means for measuring absolute time on scales of billions of years. This is because a number of unstable isotopes exist with half-lives in the billion-year range. Confidence in these methods has been very high for several reasons. The nuclear energy levels involved in radioactive decay are so much greater than the electronic energy levels associated with ordinary temperature, pressure, and chemistry that variations in the latter can have negligible effects on the former.

Furthermore, it has been assumed that the laws of nature are time invariant and that the decay rates we measure today have been constant since the beginning of the cosmos—a view, of course, dictated by materialist and uniformitarian belief. The confidence in radiometric methods among materialist scientists has been so absolute that all other methods for estimating the age of geological materials and geological events have been relegated to an inferior status and deemed unreliable when they disagree with radiometric techniques.

Most people, therefore, including most scientists, are not aware of the systematic and glaring conflict between radiometric methods and nonradiometric methods for dating or constraining the age of geological events. Yet this conflict is so stark and so consistent that there is more than sufficient reason, in my opinion, to aggressively challenge the validity of radiometric methods.

One clear example of this conflict concerns the retention of helium produced by nuclear decay of uranium in small zircon crystals commonly found in granite. Uranium tends to selectively concentrate in zircons in a solidifying magma because the large spaces in the zircon crystal lattice more readily accommodate the large uranium ions. Uranium is unstable and eventually transforms, through a chain of nuclear decay steps, into lead. In the process, eight atoms of helium are produced for every initial atom of U-238. But helium is a very small atom and is also a noble gas with little tendency to react chemically with other species. Helium, therefore, tends to migrate readily through a crystal lattice.

The conflict for radiometric methods is that zircons in Precambrian granite display huge helium concentrations.[15] When the amounts of uranium, lead, and helium are determined experimentally, one finds amounts of lead and uranium consistent with more than a billion years of nuclear decay at presently measured rates. Amazingly, most of the radiogenic helium from this decay process is also still present within these crystals that are typically only a few micrometers across. However, based on experimentally measured helium diffusion rates, the zircon helium content implies a timespan of only a few thousand years since the majority of the nuclear decay occurred.

So which physical process is more trustworthy: the diffusion of a noble gas in a crystalline lattice or the radioactive decay of an unstable isotope? Both processes can be investigated today in great detail in the laboratory. Both the rate of helium diffusion in a given crystalline lattice and the rate of decay of uranium to lead can be determined with high degrees of precision. But these two physical processes yield wildly disparate estimates for the age of the same granite rock. Where is the logical or procedural error? The most reasonable conclusion in my view is that it lies in the step of extrapolating as constant presently measured rates of nuclear decay into the remote past. If this is the error, then radiometric methods based on presently measured rates simply do not and cannot provide correct estimates for geologic age.

But just how strong is the case that radiometric methods are indeed so incorrect? There are dozens of physical processes which, like helium diffusion, yield age estimates orders of magnitude smaller than the radiometric techniques. Many of these are geological or geophysical in nature and are therefore subject to the question of whether presently observed rates can legitimately be extrapolated into the indefinite past.

However, even if we make that suspect assumption and consider the current rate of sodium increase in the oceans versus the present ocean sodium content, or the current rate of sediment accumulation into the ocean basins versus the current ocean sediment volume, or the current net rate of loss of continental rock (primarily by erosion) versus the current volume of continental crust, or the present rate of uplift of the Himalayan mountains (accounting for erosion) versus their present height, we infer time estimates drastically at odds with the radiometric timescale.[16] These time estimates are further reduced dramatically if we do not make the uniformitarian assumption but account for the global catastrophism described earlier.

There are other processes which are not as easy to express in quantitative terms, such as the degradation of protein in a geological environment, that also point to a much shorter timescale for the geological record. It is now well established that unmineralized dinosaur bone still containing recognizable bone protein exists in many locations around the world.[17] From my own firsthand experience with such material, it is inconceivable that bone containing such well-preserved protein could possibly have survived for more than a few thousand years in the geological settings in which it is found.

I therefore believe the case is strong from a scientific standpoint to reject radiometric methods as a valid means for dating geological materials. What then can be used in their place? As a Christian, I am persuaded the Bible is a reliable source of information. The Bible speaks of a worldwide cataclysm in the Genesis Flood, which destroyed all air-breathing life on the planet apart from the animals and humans God preserved alive in the Ark. The correspondence between the global catastrophe in the geological record and the Flood described in Genesis is much too obvious for me not to conclude that these events must be one and the same.

With this crucial linkage between the biblical record and the geological record, a straightforward reading of the earlier chapters of Genesis is a next logical step. The conclusion is that the creation of the cosmos, the earth, plants, animals, as well as man and woman by God took place, just as it is described, only a few thousand years ago, with no need for qualification or apology.

But what about light from distant stars?

An entirely legitimate question, then, is how we could possibly see stars millions and billions of light years away if the earth is so young. Part of the reason scientists like myself can have confidence that good science will vindicate a face-value understanding of the Bible is that we believe we have at least an outline of the correct answer to this important question.[18]

This answer draws upon important clues from the Bible, while applying standard general relativity. The result is a cosmological model that differs from the standard big-bang models in two essential respects. First, it does not assume the so-called cosmological principle and, second, it invokes inflation at a different point in cosmological history.

The cosmological principle is the assumption that the cosmos has no edge or boundary or center and, in a broad-brush sense, is the same in every place and in every direction. This assumption concerning the geometry of the cosmos has allowed cosmologists to obtain relatively simple solutions of Einstein’s equations of general relativity. Such solutions form the basis of all big-bang models. But there is growing observational evidence that this assumption is simply not true. A recent article in the journal Nature, for example, describes a fractal analysis of galaxy distribution to large distances in the cosmos that contradicts this crucial big-bang assumption.[19]

If, instead, the cosmos has a center, then its early history is radically different from that of all big-bang models. Its beginning would be that of a massive black hole containing its entire mass. Such a mass distribution has a whopping gradient in gravitational potential which profoundly affects the local physics, including the speed of clocks. Clocks near the center would run much more slowly, or even be stopped, during the earliest portion of cosmic history.[20] Since the heavens on a large scale are isotropic from the vantage point of the earth, the earth must be near the center of such a cosmos. Light from the outer edge of such a cosmos reaches the center in a very brief time as measured by clocks in the vicinity of the earth.

In regard to the timing of cosmic inflation, this alternative cosmology has inflation after stars and galaxies form. It is noteworthy that recently two astrophysics groups studying high-redshift type Ia supernovae both concluded that cosmic expansion is greater now than when these stars exploded. An article in the June 1998 issue of Physics Today describes these “astonishing” results which “have caused quite a stir” in the astrophysics community.[21] The story amazingly ascribes the cause to “some ethereal agency.”

Indeed, the Bible repeatedly speaks of God stretching out the heavens: “O Lord my God, You are very great … stretching out heaven as a curtain” (Ps. 104:1–2); “Thus says God the Lord, who created the heavens and stretched them out” (Isa. 42:5); “I, the Lord, am the maker of all things, stretching out the heavens by myself” (Isa. 44:24); “It is I who made the earth, and created man upon it. I stretched out the heavens with my hands, and I ordained all their host” (Isa. 45:12).

As a Christian who is also a professional scientist, I exult in the reality that “in six days the Lord made the heavens and the earth” (Exod. 20:11). May He forever be praised.

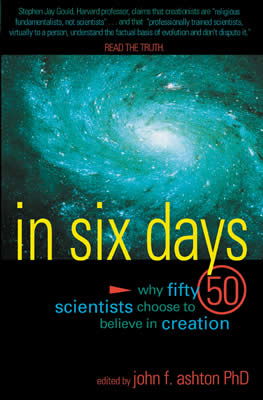

In Six Days

Can any scientist with a Ph.D. believe in the idea of a literal six-day creation? In Six Days answers this provocative question with 50 informative essays by scientists who say “Yes!”

Footnotes

1. A collection of these letters is available on the world wide web at www.nnm.com/lacf.

2. C.W. Allen, Astrophysical Quantities, 3rd ed., University of London, Athlone Press, London, p. 293, 1973; M. Fukugita, C.J. Hogan, and P.J.E. Peebles, The Cosmic Baryon Budget, Astrophysical Journal 503:518–530, 1998.

3. H.P. Yockey, A Calculation of the Probability of Spontaneous Biogenesis by Information Theory, Journal of Theoretical Biology 67:377–398, 1978; Hubert P. Yockey, Information Theory and Molecular Biology, Cambridge University Press, Cambridge, UK, 1992.

4. Fred Hoyle and Chandra Wickramasinghe, Evolution From Space, J.M. Dent, London, 1981.

5. A. Einstein, Remarks on Bertrand Russell’s Theory of Knowledge; in The Philosophy of Bertrand Russell, P.A. Schilpp (Ed.), Tudor Pub., New York, p. 290, 1944.

6. John W. Oller Jr., Language and Experience: Classic Pragmatism, University Press of America, Lanham, MD, p. 25, 1989.

7. M. Eden, Inadequacies of Neo-Darwinian Evolution as a Scientific Theory; in P.S. Moorhead and M.M. Kaplan (Eds.), Mathematical Challenges to the Neo-Darwinian Interpretation of Evolution, Wistar Institute Press, Philadelphia, PA, p. 11, 1967.

8. R.G. Strom, G.G. Schaber, and D.D. Dawson, The Global Resurfacing of Venus, Journal of Geophysical Research 99:10899–10926, 1994.

9. S.A. Austin, J.R. Baumgardner, D.R. Humphreys, A.A. Snelling, L. Vardiman, and K.P. Wise, Catastrophic Plate Tectonics: A Global Flood Model of Earth History, pp. 609–621; J.R. Baumgardner, Computer Modeling of the Large-Scale Tectonics Associated with the Genesis Flood, pp. 49–62; Runaway Subduction as the Driving Mechanism for the Genesis Flood, pp. 63–75; in R.E. Walsh (Ed.), Proceedings of the Third International Conference on Creationism, Technical Symposium Sessions, Creation Science Fellowship, Inc., Pittsburgh, PA, 1994.

10. O.L. Anderson and P.C. Perkins, Runaway Temperatures in the Asthenosphere Resulting from Viscous Heating, Journal of Geophysical Research 79:2136–2138, 1974.

11. S.A. Austin, Grand Canyon: Monument to Catastrophe, Interpreting Strata of the Grand Canyon, Institute for Creation Research, El Cajon, CA, pp. 46–47, 1994.

12. Ibid., pp. 42–51.

13. Ibid., pp. 32–36.

14. J.R. Baumgardner and D.W. Barnette, Patterns of Ocean Circulation Over the Continents During Noah’s Flood; in R.E. Walsh (Ed.), Proceedings of the Third International Conference on Creationism, Technical Symposium Sessions, Creation Science Fellowship, Inc., Pittsburgh, PA, pp. 77–86, 1994.

15. R.V. Gentry, G.L. Glish, and E.H. McBay, Differential Helium Retention in Zircons: Implications for Nuclear Waste Containment, Geophysical Research Letters 9:1129–1130, 1982.

16. S.A. Austin and D.R. Humphreys, The Sea’s Missing Salt: A Dilemma for Evolutionists; in R.E. Walsh and C.L. Brooks (Eds.), Proceedings of the Second International Conference on Creationism, Vol. II, Creation Science Fellowship, Inc., Pittsburgh, PA, pp. 17–33, 1990.

17. G. Muyzer, P. Sandberg, M.H.J. Knapen, C. Vermeer, M. Collins, and P. Westbroek, Preservation of the Bone Protein Osteocalcin in Dinosaurs, Geology 20:871–874, 1992.

18. D. Russell Humphreys, Starlight and Time, Master Books, Green Forest, AR, 1994.

19. P. Coles, An Unprincipled Universe? Nature 391:120–121, 1998.

20. D.R. Humphreys, New Vistas of Space-Time Rebut the Critics, TJ 12(1):195–212, 1998.

21. B. Schwarzschild, Very Distant Supernovae Suggest That the Cosmic Expansion Is Speeding Up, Physics Today 51:17–19, 1998.
